This is the ninth installment in a series describing a radically more productive development and delivery environment.

The first part is here: Intro. In the previous parts I described the big picture, Vagrant and EC2, node initialization, the one minute configuration HeartBeat, HipChat integration, presence, EC2, and configuring nodes.

Using Presence for configuration

So far, presence has been just information that ‘humans’ consume. It shows up on dashboards, in chat rooms, and so on, but nothing has acted upon it. Until now!

We have ‘app’ and ‘db’ nodes. Clearly the ‘app’ nodes need to find the ‘db’ nodes or the app is not going to be able to persist much. The ‘db’ here happens to be ‘Maria’ but it could be anything from a single DB node to a cluster of Riak nodes. At the moment, I just want to get the information that a ‘db’ node knows (“I exist!”, “My IP is this!”) over to the ‘app’ nodes so they can process it.

It is already there?

But wait? All nodes have ‘repo4’ for writing? Don’t they already have everything in ‘repo4’ for reading as well?

And so they do. The presence information is already there waiting patiently for somebody or someboty to read it.

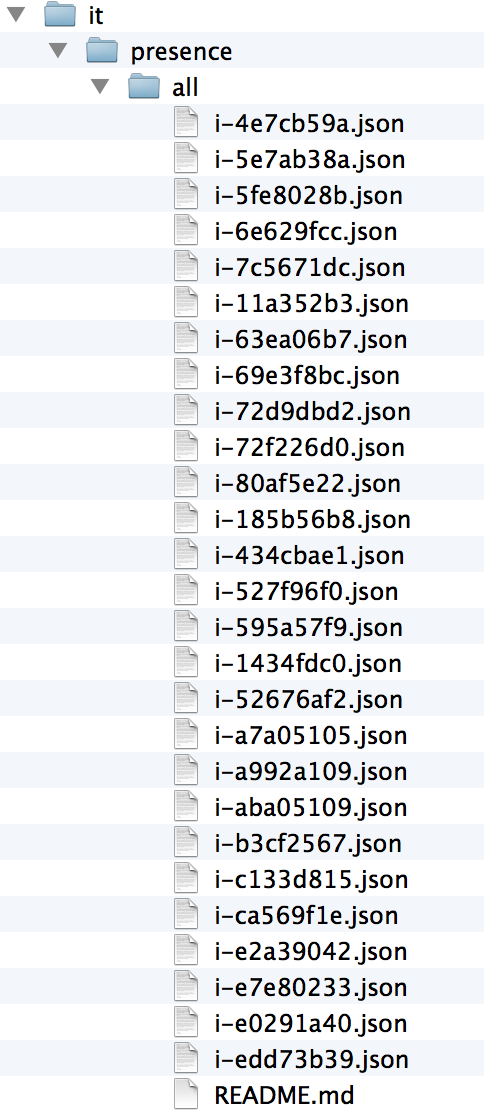

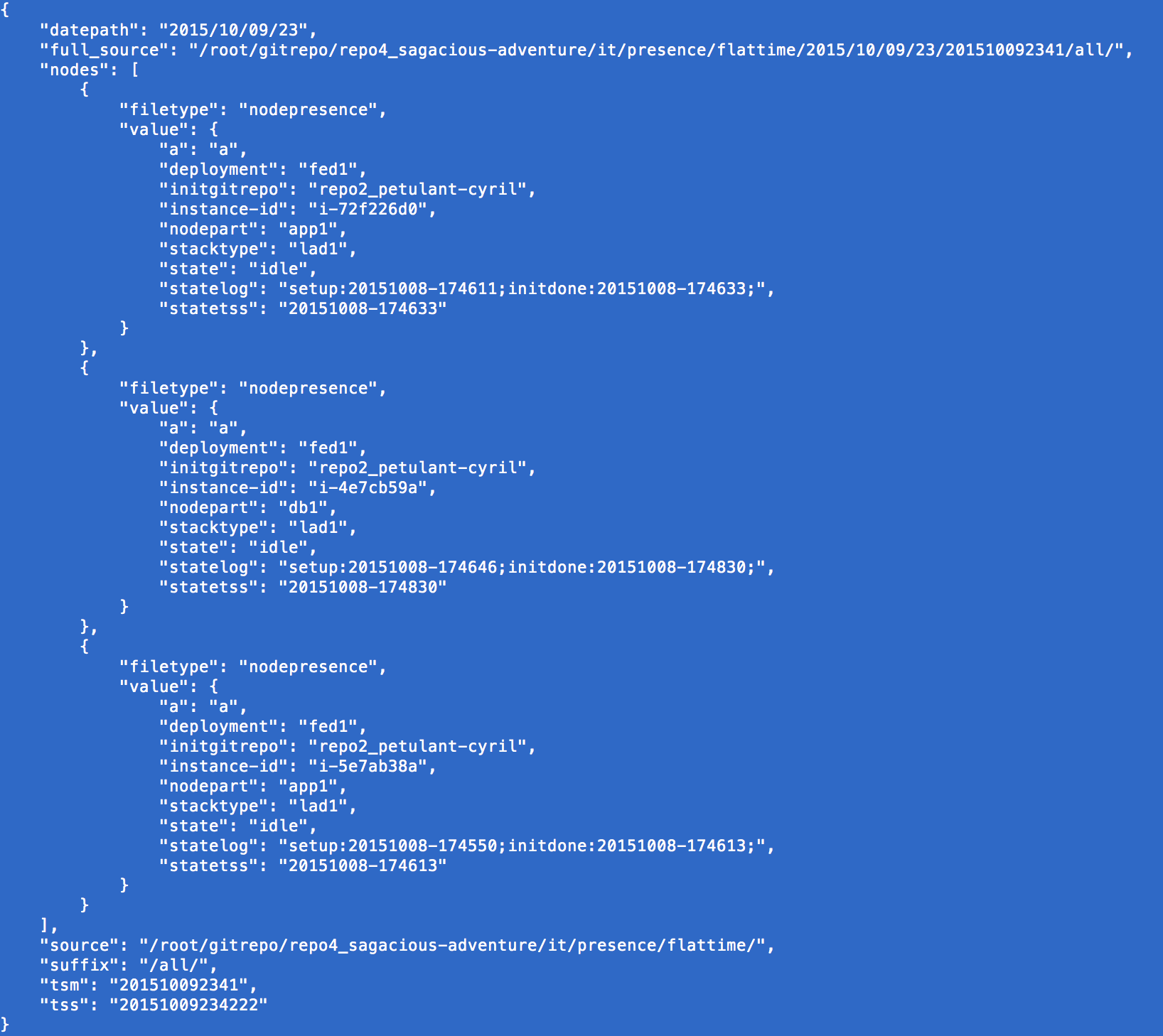

As of this writing, repo4 looks like this:

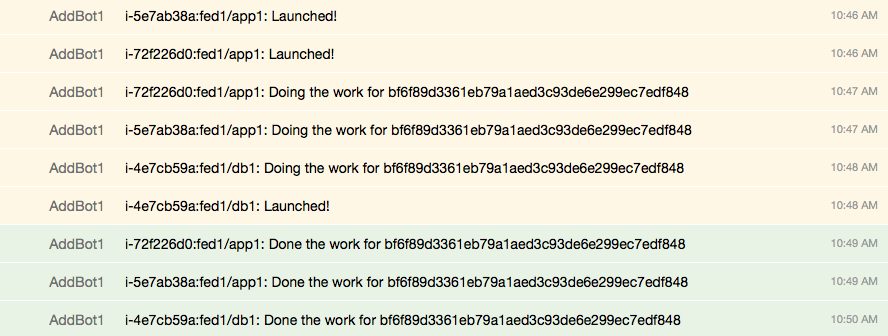

And the live hipchat still looks like this:

So the DB server is definitely there:


And the information is there:


So the only remaining issue is for nodes to ‘find their partners’.

Finding the partners

According to HipChat, only three nodes are alive, so problem one is telling live nodes from dead nodes. There are two levels to that:

- Finding plausibly live nodes

- Finding truly awake nodes

The simplest approach to the first is to make sure there is some kind of heartbeat within ‘statetss’. A heartbeat every minute is clearly possible, but a bit noisy if done in the main part of repo4. It would be nice not to have the heartbeat drown out the actual state change information that is already there. An interesting alternative is to ‘flatten time’ and have an alternative branch that stores information as ‘it/presence/flattime/timestamp’. Or, given we are storing the information differently, we could use the main branch and just change the comment to mention ‘flattime’. Yes, the information in the files would not change very often. But Git stores file contents separately from paths, so there is very little overhead in adding new paths to identical files.
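To make the "identical files are cheap" point concrete, here is a minimal sketch that computes the object id Git gives a file's contents. It assumes a repository using Git's default SHA-1 object format, and the presence payload is a made-up example, not the real file contents.

```python
import hashlib


def git_blob_id(content: bytes) -> str:
    """Return the SHA-1 object id Git assigns to a blob with this content."""
    # Git hashes a small header ("blob <size>\0") followed by the raw bytes.
    header = b"blob " + str(len(content)).encode("ascii") + b"\0"
    return hashlib.sha1(header + content).hexdigest()


# Hypothetical presence payload (assumption: real field names may differ).
presence = b'{"name": "db-1", "type": "db", "ip": "10.0.0.5"}\n'

# The path is not part of the hash, so the same unchanged file checked in
# under two different flattime paths resolves to one stored blob.
id_first_minute = git_blob_id(presence)
id_next_minute = git_blob_id(presence)
```

Each new path still adds entries to Git's tree objects, but the file contents themselves are stored once.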

Time flattened

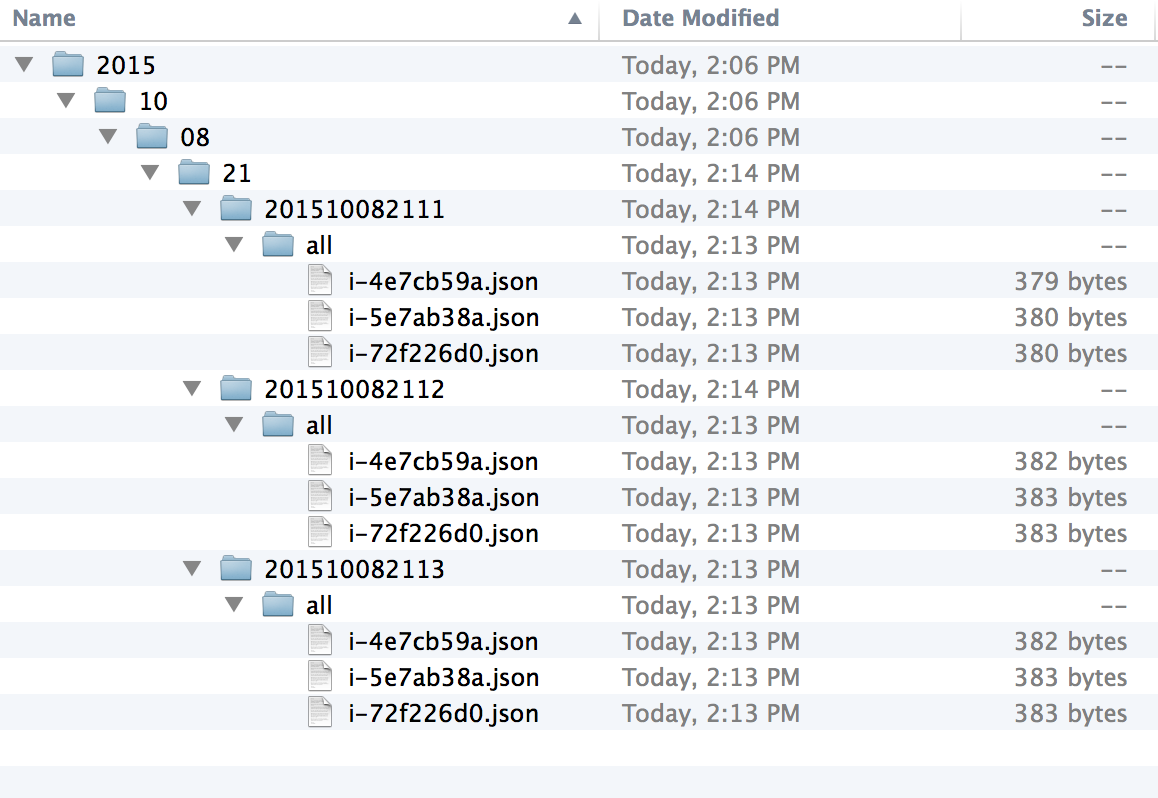

repo4 has a folder under ‘presence’ called ‘flattime’ which contains the presence information, where every checkin is a new path. To keep things manageable, the timestamp is the complete ‘YYYYMMDDHHMM’, but year/month/day/hour/ is used to organize it. Seconds are not used because we want everything from the same minute to be together, and there is no guarantee the seconds would match between machines.
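As a rough sketch of that layout, the path for one checkin could be built like this. The `presence/flattime` prefix and the year/month/day/hour folders come from the description above; the per-node file name (`<node>.json`) and the function name are assumptions for illustration.

```python
from datetime import datetime, timezone


def flattime_path(node_name: str, when: datetime) -> str:
    """Build a flattened-time path; seconds are deliberately dropped."""
    folders = when.strftime("%Y/%m/%d/%H")   # organizes the tree by hour
    stamp = when.strftime("%Y%m%d%H%M")      # complete minute-level timestamp
    # Assumption: one JSON file per node inside each minute's folder.
    return f"presence/flattime/{folders}/{stamp}/{node_name}.json"


# Two machines whose clocks differ by a few seconds land in the same folder.
early = datetime(2013, 5, 4, 12, 7, 3, tzinfo=timezone.utc)
late = datetime(2013, 5, 4, 12, 7, 58, tzinfo=timezone.utc)
same_minute = flattime_path("db-1", early) == flattime_path("db-1", late)
```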

Looking at a few minutes of time, we get something like this:

The files change a bit because the machines are switching states and at the ‘capture’ moment could be in almost any state of their ‘state machine’.

How precise?

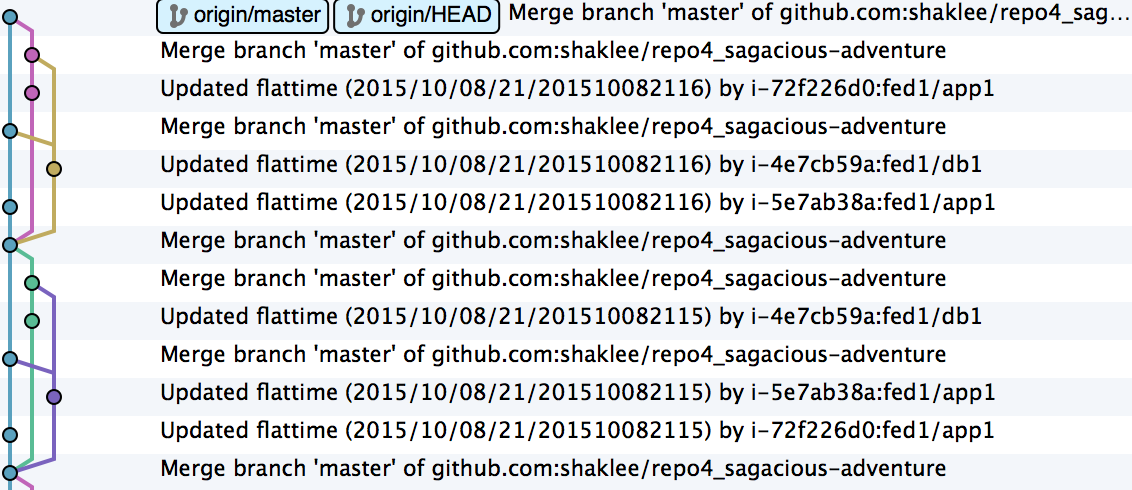

So this is quite precise in time and you could certainly slow it down. Sometimes providers get annoyed if you use git this way, but the software itself is thoroughly comfortable with it. You can get some impressive merge graphs as the machine count goes up.

So checking in less often and with more jitter will help alleviate some of that stress. Since this is only the first stage of presence (what exists and is plausibly alive), we will deal with stale data in the second stage.
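One way to add jitter is to randomize each node's delay around a base interval so that machines do not all push at the same instant. This is only a sketch: the five-minute base and the 20% spread are illustrative values, not settings from this series.

```python
import random


def next_checkin_delay(base_seconds: float = 300.0,
                       jitter_fraction: float = 0.2,
                       rng=random) -> float:
    """Return the number of seconds to wait before the next presence checkin."""
    spread = base_seconds * jitter_fraction
    # Uniformly pick a delay in [base - spread, base + spread].
    return base_seconds + rng.uniform(-spread, spread)


# Example: a node would sleep for this long between checkins.
delay = next_checkin_delay()
```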

Who is what?

So we have a directory of JSON files and we want to find certain kinds of partners. It is simplest to just run through all the files and see what is inside them. The files can be loaded one-by-one or concatenated together into a working temporary file.

A basic python script could look like this:

buildLiveServerJson.py

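A minimal sketch of the "run through all the files" approach might look like the following. It is not the original script: the function names and the `type` field used to tell ‘app’ from ‘db’ nodes are assumptions about how the presence JSON is shaped.

```python
import json
from pathlib import Path


def load_presence(directory):
    """Load every readable JSON object found directly in `directory`."""
    nodes = []
    for path in sorted(Path(directory).glob("*.json")):
        try:
            with path.open(encoding="utf-8") as handle:
                record = json.load(handle)
        except (OSError, ValueError):
            # Skip unreadable or half-written files instead of failing.
            continue
        if isinstance(record, dict):
            nodes.append(record)
    return nodes


def find_partners(nodes, wanted_type):
    """Keep only nodes whose (assumed) 'type' field matches, e.g. 'db'."""
    return [node for node in nodes if node.get("type") == wanted_type]


def write_plausible_nodes(nodes, out_path):
    """Store the first-stage list of plausible nodes as one JSON file."""
    Path(out_path).write_text(json.dumps(nodes, indent=2), encoding="utf-8")
```

Skipping files that fail to parse keeps one node's bad checkin from hiding every other node.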

Our first stage is to get a list of plausible nodes and store that:


Next we can filter out the ones that don’t respond to our ‘awake’ check. That leaves us with one or more remaining. Depending on the kind of system, you may actually want to know about a bunch of nodes rather than just one (e.g. ZooKeeper).
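The ‘awake’ check itself is not spelled out here, so the sketch below assumes the simplest possible version: a node counts as awake if a TCP connection to its service port succeeds within a short timeout. The `ip` field and both function names are hypothetical.

```python
import socket


def is_awake(ip, port, timeout=1.0):
    """Return True if a TCP connection to ip:port succeeds within `timeout`."""
    try:
        with socket.create_connection((ip, port), timeout=timeout):
            return True
    except OSError:
        # Refused, unreachable, and timed-out connections all count as asleep.
        return False


def awake_nodes(nodes, port, check=is_awake):
    """Filter plausible nodes down to the ones that answer the check."""
    return [node for node in nodes if "ip" in node and check(node["ip"], port)]
```

A caller that needs a single partner can take the first entry of the result, while one that wants a whole ensemble can keep the full list.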